Imagine a customer who needs help on a Sunday night. Instead of digging through a help center, sitting in a queue, or pinballing between an FAQ bot and an overworked agent, they ask one question in chat - and the entire issue resolves in the next sixty seconds. The agent reads the ticket, pulls up the order, checks the policy, issues the refund, books the replacement window, sends the confirmation, and updates the CRM record. No handoff. No escalation. No "we'll get back to you within 24 hours."

That is what an AI customer support agent is supposed to do in 2026 - and for the first time, the underlying models can actually pull it off. The shift from chatbot to agent is not a marketing repositioning. It is the consequence of three things landing at once: long-context models that can hold an entire knowledge base in working memory, agentic tool-use that survives long multi-step workflows, and an open-weight cost curve that finally makes high-volume deployment economical.

This piece walks through what changed, why it matters for support teams, and how to actually ship an agent on Berrydesk that does the work - not just the talking.

From Scripted Chatbot to Acting Agent

A chatbot, in the traditional sense, was a routing layer in front of a knowledge base. It matched intent, retrieved a snippet, and either answered the question or punted the user to a human. When the question fell outside its training data, the conversation ended and a ticket got created. Useful for deflection metrics. Frustrating for customers.

An AI agent inverts that posture. It still talks, but talking is the smallest part of what it does. The agent reads the conversation, decides what action is needed, calls the right tools, observes the results, and continues until the user's actual problem is solved. "Can I return this item?" is no longer a query for the FAQ retriever. It is the start of a transaction the agent will run end-to-end: verify the order, confirm eligibility against the return policy, generate the shipping label, deduct restocking fees if applicable, schedule the refund through the payments processor, and write a clean note back to the CRM so finance can reconcile.
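
To make the read, decide, act, observe loop concrete, here is a minimal Python sketch. It assumes a `decide` function standing in for the model and two hypothetical tools, `lookup_order` and `create_label`; none of these names come from Berrydesk's API. The point is the shape: the agent keeps calling tools and accumulating results until it has something final to say, and hands off if it runs out of steps.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

@dataclass
class Step:
    tool: Optional[str]                       # tool to call next; None means "reply and stop"
    args: dict = field(default_factory=dict)  # keyword arguments for the tool
    reply: str = ""                           # final customer-facing message when tool is None

def run_agent(decide: Callable[[list], Step],
              tools: dict[str, Callable[..., dict]],
              max_steps: int = 10) -> tuple[str, list]:
    """Ask the policy for a step, run the tool, record the result, repeat until it replies."""
    observations: list = []
    for _ in range(max_steps):
        step = decide(observations)           # in production this would be the model call
        if step.tool is None:
            return step.reply, observations
        result = tools[step.tool](**step.args)
        observations.append((step.tool, result))
    # A loop that never converges goes to a human instead of spinning forever.
    return "Escalating to a human: step budget exhausted.", observations

# Hypothetical tools for the return flow described above.
tools = {
    "lookup_order": lambda order_id: {"order_id": order_id, "eligible": True},
    "create_label": lambda order_id: {"label_url": f"https://example.com/labels/{order_id}"},
}

def scripted_decide(observations: list) -> Step:
    """Stand-in for the model: verify the order, issue a label, then confirm."""
    done = {name for name, _ in observations}
    if "lookup_order" not in done:
        return Step("lookup_order", {"order_id": "A123"})
    if "create_label" not in done:
        return Step("create_label", {"order_id": "A123"})
    return Step(None, reply="Your return is approved and the label is in your inbox.")

reply, trace = run_agent(scripted_decide, tools)
```

The step budget is a design choice worth keeping even with strong models: it turns an agent that has lost its way into an escalation rather than a stalled conversation.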

The model class doing this work in 2026 is fundamentally different from what shipped in earlier generations. Claude Opus 4.7 tops SWE-bench Pro at 64.3% on complex multi-step coding workflows - a benchmark that rewards the same sustained, reliable tool use a support agent depends on. Kimi K2.6 can run autonomous coding sessions for twelve hours and orchestrate up to 300 sub-agents across 4,000 coordinated steps. GLM-5.1 runs an eight-hour plan-execute-test-fix loop. None of these were built for support specifically, but the agentic substrate they provide is exactly what a serious support deployment needs: an agent that does not lose its place after the third tool call.

That is the line that got crossed. Before it, "AI customer support" meant smarter retrieval. After it, it means closing tickets.

Why This Shift Is Reshaping Support Operations

Support has always been a throughput problem dressed up as a quality problem. Every team optimizes the same handful of numbers: time to first response, resolution time, cost per ticket, CSAT. Older chatbots improved one of those - first response - and barely moved the rest. Agents move all of them, because they are operating on the actual workflow, not on the conversation around the workflow.

Action, not just answers

The clearest change is that an agent executes. Reset a password, modify a subscription, change a shipping address, cancel a trial, escalate a fraud flag, refund a duplicate charge - all of these are bounded, well-specified operations that used to require a human to log into three different consoles. With Berrydesk's AI Actions, each becomes a typed function the agent can call. A customer asking about a refund no longer reads a paragraph explaining your policy; the agent applies it. The downstream effect on a support team is significant: human agents who used to spend their day on repetitive transactions get redeployed to the cases that genuinely benefit from human judgment.

Conversation memory that survives across sessions

Earlier chatbots treated each session as a fresh start. The customer typed their order number for the fourth time that week. With long-context frontier models - Gemini 3.1 Ultra at 2M tokens, Claude Sonnet 4.6 and Opus 4.6 at 1M tokens with no surcharge, DeepSeek V4 Flash at 1M tokens - that constraint dissolves. An agent on Berrydesk can carry the full prior conversation history, the customer's account state, your active policies, and your full product documentation in-context simultaneously. RAG does not go away, but it becomes a tuning lever for cost and latency rather than a hard architectural requirement. For support specifically, that means context-aware interactions are no longer a roadmap item. They are the default.
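
One way to treat long context as a cost lever rather than a requirement is a simple budget check: place the highest-priority sources in the window until it is full and leave the rest to retrieval. The sketch below assumes you already know each source's token count; the source names and sizes are illustrative, and the 1M-token window matches the models named above.

```python
def plan_context(sources: dict[str, int], window: int, reserve: int) -> tuple[list[str], list[str]]:
    """Greedily place sources in the context window in priority order; the rest go to retrieval.

    `sources` maps a source name to its token count, highest priority first
    (Python dicts keep insertion order). `reserve` holds back room for the
    live conversation and the model's reply.
    """
    budget = window - reserve
    in_context: list[str] = []
    retrieve: list[str] = []
    for name, tokens in sources.items():
        if tokens <= budget:
            in_context.append(name)
            budget -= tokens
        else:
            retrieve.append(name)
    return in_context, retrieve

# Illustrative sizes for a 1M-token model; real counts would come from your tokenizer.
sources = {
    "active_policies": 40_000,
    "account_state": 5_000,
    "product_docs": 600_000,
    "ticket_archive": 2_000_000,
}
in_context, retrieve = plan_context(sources, window=1_000_000, reserve=50_000)
```

Greedy packing is deliberately simple. Every token placed in-context is paid for on every turn, so a real deployment would likely weigh cost and latency per source, not just whether it fits.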

Real integration into the systems that hold the truth

A support agent that cannot read your CRM is a guess machine. Berrydesk's integration model treats the connected systems - your help desk, your e-commerce platform, your subscription billing, your shipping provider, your data warehouse - as part of the agent's operating environment. When a customer asks where their package is, the agent does not generate plausible language about shipping. It calls the carrier API, reads the actual status, and reports it. When the customer wants to dispute a charge, the agent pulls the receipt, checks for refund eligibility against current policy, and either resolves it or escalates with the full case packaged for the human picking it up.

Proactive engagement, calibrated to context

Agents do not need to wait for a question to start working. A user lingering on the pricing page can be offered a short walk-through of the right plan for their team size. A customer whose subscription is auto-renewing in three days at a higher tier can be flagged before the charge, not after the chargeback. A first-time buyer asking about delivery can be quietly enrolled in the loyalty program once the order ships. Done well, this is helpful. Done badly, it is the chatbot equivalent of a pop-up ad. The difference is whether the agent is operating on real signal or just on time-on-page.
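
"Real signal" can be as plain as a rule over account data. This sketch encodes the renewal example: flag a subscription that renews within three days at a higher price. The `Subscription` fields and the dates are hypothetical; in practice they would come from your billing system.

```python
from dataclasses import dataclass
from datetime import date

@dataclass
class Subscription:
    customer_id: str
    renews_on: date
    current_price: float   # what the customer pays today
    renewal_price: float   # what the next charge will be

def should_notify_before_renewal(sub: Subscription, today: date, window_days: int = 3) -> bool:
    """True when a renewal lands within the window and will cost more than today's price."""
    days_left = (sub.renews_on - today).days
    return 0 <= days_left <= window_days and sub.renewal_price > sub.current_price

# Illustrative accounts.
today = date(2026, 3, 1)
upgrade_soon = Subscription("cus_42", date(2026, 3, 4), current_price=49.0, renewal_price=79.0)
same_price = Subscription("cus_43", date(2026, 3, 2), current_price=49.0, renewal_price=49.0)
```

Here `upgrade_soon` is flagged and `same_price` is not: the trigger fires on a billing fact, not on how long someone lingered on a page.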

Multi-step problem solving that does not collapse halfway through

A meaningful share of support tickets are not single-question lookups. They are short workflows: "my order arrived damaged, I want a replacement, but I'm moving next week so can you ship to a different address, and I'm also a Pro subscriber so I think shipping should be free?" That is four operations, three lookups, and one policy check. The previous chatbot generation would have answered the first sentence and silently lost the rest. The agentic models - Claude Opus 4.7, Qwen3.6, MiMo-V2-Pro, Kimi K2.6 - were specifically trained on long tool-use trajectories. They hold the plan, execute steps in order, recover when an API call fails, and confirm the resolution at the end.
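
The "recover when an API call fails" part is ordinary engineering around the model. A minimal sketch, assuming each plan step is a zero-argument callable and that retryable failures raise a hypothetical `TransientError`: steps run in order, transient failures are retried with exponential backoff, and a step that keeps failing escalates with the completed work attached instead of silently dropping the rest of the request. The step names mirror the damaged-order example and are illustrative.

```python
import time
from typing import Callable

class TransientError(Exception):
    """Hypothetical marker for retryable failures such as an API timeout."""

def run_plan(steps: list[tuple[str, Callable[[], dict]]],
             retries: int = 2, backoff: float = 0.0) -> dict:
    """Run steps in order, retrying transient failures; escalate with progress if a step keeps failing."""
    completed: dict[str, dict] = {}
    for name, call in steps:
        for attempt in range(retries + 1):
            try:
                completed[name] = call()
                break
            except TransientError:
                if attempt == retries:
                    # Hand off with everything done so far, so nothing is redone or lost.
                    return {"status": "escalated", "failed_step": name, "completed": completed}
                time.sleep(backoff * 2 ** attempt)   # exponential backoff between attempts
    return {"status": "resolved", "completed": completed}

calls = {"address": 0}

def update_address() -> dict:
    calls["address"] += 1
    if calls["address"] == 1:
        raise TransientError("address service timed out")   # first attempt fails
    return {"ship_to": "new address on file"}

def carrier_down() -> dict:
    raise TransientError("carrier API unavailable")          # always fails

plan = [
    ("verify_damage_claim", lambda: {"approved": True}),
    ("update_shipping_address", update_address),
    ("check_pro_free_shipping", lambda: {"shipping_fee": 0}),
    ("create_replacement_order", lambda: {"order_id": "R-1001"}),
]
result = run_plan(plan)
```

In this run the address step fails once, succeeds on retry, and all four steps complete in order. A plan that includes `carrier_down` would instead return `"escalated"` with the earlier steps preserved in `completed`.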

AI Agents vs. the Traditional Support Stack

The right comparison is not "AI versus humans." Most support orgs that deploy agents seriously end up with both, doing different work. The comparison that matters is what the AI side of that stack now looks like compared to a pure human-staffed team.

Speed and coverage

Human teams work in shifts, batch tickets, and have a hard ceiling on parallelism - one person, one conversation. AI agents are always on, scale horizontally without hiring, and respond in seconds at any volume. For global businesses where the support load is distributed across time zones, that alone changes the operating model. A 50-person support team that used to need 24/7 coverage with three regional shifts can run a smaller core team during business hours and route off-hours volume to the agent layer - escalating only the cases that actually need a human.

Consistency

Two human agents reading the same policy can give different answers. They are not wrong, exactly - they are just calibrating differently against ambiguous cases. AI agents pull from the same canonical source every time, which makes the outputs auditable. When a policy needs to change, you change it once in the agent's knowledge base and the next conversation reflects it. You do not run a training session for thirty agents and hope it stuck.

Unit economics that finally pencil out

This is the part of the story that changed most in 2026. The arrival of frontier-class open-weight models from DeepSeek, Z.ai, Moonshot, MiniMax, Alibaba, and Xiaomi has collapsed the cost of high-quality inference. DeepSeek V4 Flash runs at $0.14 per million input tokens and $0.28 per million output. MiniMax M2 ships at roughly 8% of Claude Sonnet's price at twice the speed. For a typical support deployment, this means routine traffic - order status, password resets, basic FAQ - can be served at fractions of a cent per resolution, with Claude Opus 4.7, GPT-5.5 Pro, or Gemini 3.1 Ultra reserved for the harder escalations where reasoning quality is worth the premium. Berrydesk's model picker lets you wire that routing explicitly: pick the right model for each conversation type rather than paying frontier prices for "what's your refund policy."
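
To see where "fractions of a cent" comes from, multiply tokens by price. The prices below are the DeepSeek V4 Flash figures quoted above; the token counts for a short order-status conversation are an assumption, not a measurement.

```python
def cost_per_resolution(input_tokens: int, output_tokens: int,
                        input_price_per_m: float, output_price_per_m: float) -> float:
    """Dollar cost of one resolved conversation, given per-million-token prices."""
    return (input_tokens * input_price_per_m + output_tokens * output_price_per_m) / 1_000_000

# DeepSeek V4 Flash prices from the text; token counts are a hypothetical order-status chat.
cost = cost_per_resolution(input_tokens=6_000, output_tokens=400,
                           input_price_per_m=0.14, output_price_per_m=0.28)
print(f"${cost:.6f} per resolution ({cost * 100:.4f} cents)")
```

With these assumed numbers the result is roughly $0.00095, just under a tenth of a cent. Swapping in your own token counts and each model's prices gives a like-for-like comparison before you commit to a route.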

Personalization grounded in actual data

A returning customer should not have to repeat their issue. Agents with access to CRM history, prior tickets, and account state can pick up where the last conversation left off - and can pull in signals the customer has not explicitly mentioned, like a known shipping issue affecting their region or an open ticket their account already has. This is the kind of personalization that human agents can theoretically deliver but rarely have time to assemble in real time. For an AI agent, it is one tool call.

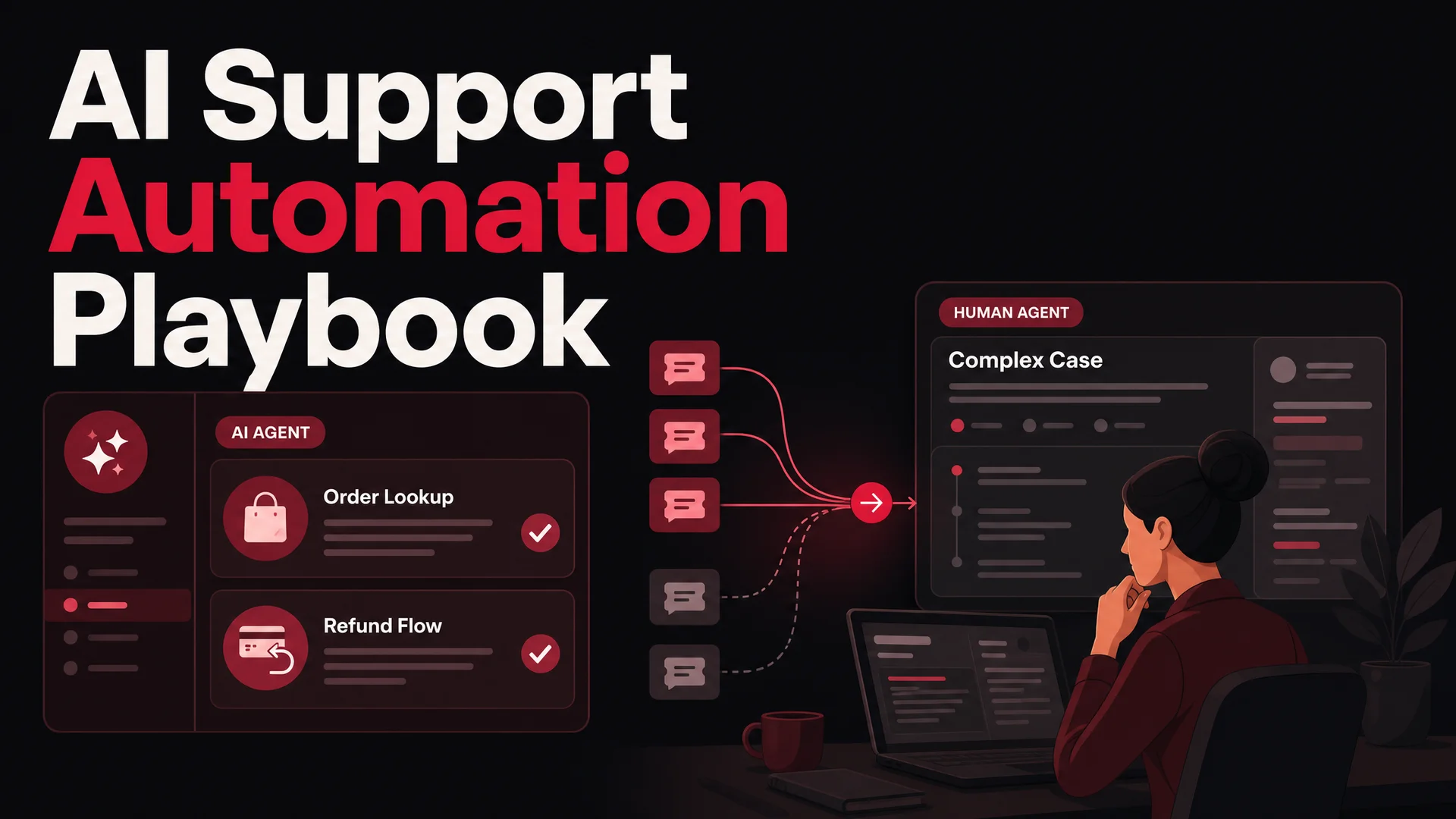

Hybrid by design

The healthiest deployments treat the agent as the front line and the human team as the specialist layer. The agent handles volume, surfaces the unusual, and packages context for the human when it escalates. The human gets a richer brief than they ever did in a tier-one queue: full conversation transcript, what the agent already tried, account state, and a short summary of the open question. That handoff quality is what makes the hybrid model land - not the AI, and not the human alone.
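
The handoff brief can be a small structured record rather than free text. A sketch with hypothetical field names mirroring the list above (transcript, what was tried, account state, the open question); this is an illustration, not Berrydesk's escalation format, and the ticket content is invented.

```python
from dataclasses import dataclass, field

@dataclass
class HandoffBrief:
    ticket_id: str
    open_question: str                                   # one-line summary of what still needs a human
    transcript: list[str]                                # full conversation, oldest message first
    actions_tried: list[str] = field(default_factory=list)
    account_state: dict[str, str] = field(default_factory=dict)

    def render(self) -> str:
        """Format the brief so the person picking it up can act without rereading the chat."""
        lines = [f"Ticket {self.ticket_id}: {self.open_question}", "Already tried:"]
        lines += [f"  - {a}" for a in self.actions_tried] or ["  - nothing yet"]
        lines.append("Account:")
        lines += [f"  {k}: {v}" for k, v in sorted(self.account_state.items())]
        lines.append(f"Transcript ({len(self.transcript)} messages):")
        lines += self.transcript
        return "\n".join(lines)

brief = HandoffBrief(
    ticket_id="T-88",
    open_question="Customer disputes a second $120 charge that falls outside the refund policy.",
    transcript=["Customer: I was charged $120 twice this month.",
                "Agent: I refunded one duplicate; the other needs review."],
    actions_tried=["pulled both receipts", "refunded one duplicate $120 charge"],
    account_state={"plan": "Pro", "tenure": "3 years"},
)
print(brief.render())
```

The summary line comes first on purpose: the human reads the open question before anything else, and the transcript sits at the bottom for when they need it.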

Where AI Support Deployments Go Wrong

Before getting into the build, it is worth being honest about the failure modes. Most struggling deployments share a few patterns.

The first is missing tool use. Teams plug a model into a knowledge base and call it an agent without giving it real actions to take. The result is a smarter FAQ bot - useful, but not what was promised. Agents earn their keep by doing things, not by retrieving things.

The second is missing escalation paths. An agent that cannot hand off cleanly will eventually be put in front of a case it should not handle, and the customer experience collapses. A good escalation path is fast, automatic, and preserves context - the human who picks up the ticket should not have to re-read the whole conversation.
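
"Automatic" escalation means the rules are checked on every turn rather than left to the model's discretion alone. A minimal sketch, assuming hypothetical signals and thresholds (a human-only topic list, a two-failure limit, a $200 refund ceiling) that a real team would set from its own policy:

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical topics a deployment might reserve for humans.
HUMAN_ONLY_TOPICS = {"legal_threat", "account_takeover", "chargeback_dispute"}

@dataclass
class TurnSignals:
    topic: str
    customer_asked_for_human: bool = False
    failed_actions: int = 0       # tool calls that errored in this conversation
    refund_amount: float = 0.0    # dollars the agent is about to move, if any

def escalation_reason(s: TurnSignals, max_failed: int = 2,
                      refund_limit: float = 200.0) -> Optional[str]:
    """Return why this turn should go to a human, or None if the agent may continue."""
    if s.customer_asked_for_human:
        return "customer asked for a person"
    if s.topic in HUMAN_ONLY_TOPICS:
        return f"'{s.topic}' is reserved for humans"
    if s.failed_actions >= max_failed:
        return f"{s.failed_actions} failed actions"
    if s.refund_amount > refund_limit:
        return f"refund above ${refund_limit:.0f} limit"
    return None
```

Returning a reason string rather than a bare boolean means the escalation carries its own explanation into the handoff.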

The third is set-and-forget deployment. Models drift, products change, policies update, and the agent's behavior needs to track those changes. Teams that treat the agent as a launched-and-done project see quality decay within a couple of months. Teams that review transcripts weekly, refine prompts, and update training sources keep getting better.

The fourth is over-reliance on a single model. Frontier models have different strengths - Claude Opus 4.7 for complex reasoning, Gemini 3.1 Pro for multimodal and long-context, DeepSeek V4 Flash and MiniMax M2 for cheap high-volume traffic, GLM-5.1 and Qwen3.6 for agentic workflows in regulated or air-gapped environments where MIT/Apache-licensed open weights are a hard requirement. A serious deployment routes by ticket type and risk profile. Berrydesk is built for that pattern.
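
Routing by ticket type and risk profile can start as a few ordered rules. The sketch below uses the model pairings named in the paragraph above; the `Ticket` fields and the rule order are assumptions, and the returned strings are display names, not API model identifiers or Berrydesk configuration.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Ticket:
    kind: str                 # e.g. "order_status", "billing", "fraud", "damaged_item"
    high_risk: bool = False   # e.g. money movement above a threshold or an open fraud flag
    has_media: bool = False   # customer attached photos or video
    air_gapped: bool = False  # data may not leave your own infrastructure

def route(ticket: Ticket) -> str:
    """Pick a model by hard constraint first, then risk, then modality, defaulting to the cheap tier."""
    if ticket.air_gapped:
        return "GLM-5.1"              # self-hostable open weights
    if ticket.high_risk or ticket.kind == "fraud":
        return "Claude Opus 4.7"      # strongest reasoning where mistakes are costly
    if ticket.has_media:
        return "Gemini 3.1 Pro"       # multimodal and long-context
    return "DeepSeek V4 Flash"        # cheap high-volume default
```

Order matters: the air-gapped check runs first because a data-residency constraint overrides everything else, and risk is checked before media so a high-risk ticket with a photo attached still goes to the strongest reasoner.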

Building an AI Support Agent on Berrydesk

The setup is intentionally short. The interesting work happens after launch, not during.

Step 1: Create the agent and pick the model

Sign up at berrydesk.com, open the dashboard, and create a new agent. The first decision is which model backs it - and Berrydesk gives you the full menu. GPT-5.5 and GPT-5.5 Pro for the OpenAI stack with parallel reasoning. Claude Opus 4.7 or Sonnet 4.6 if you want the strongest tool-use reliability and a native 1M-token window. Gemini 3.1 Ultra or Pro if you need 2M-token context or native multimodal handling for image and video tickets. DeepSeek V4, Kimi K2.6, GLM-5.1, Qwen3.6, or MiniMax M2 if cost or open-weight licensing matters. You can pick one for the whole agent, or assign different models to different conversation types later.

Name the agent something your team will recognize on the dashboard. The name your customers see in the widget is set separately during branding.

Step 2: Connect the knowledge sources

The agent's quality is bounded by what it knows. Berrydesk supports multiple training sources, and most production deployments use several of them in combination.

Document upload. Drop in product manuals, return policies, warranty documents, internal SOPs, escalation guidelines, anything PDF or text-based. The agent indexes them and uses them for grounded responses.

Web crawl. Point Berrydesk at your public help center, product documentation site, or pricing page. The crawler pulls structured content and picks up updates so the agent stays in sync as docs change.

Notion and Google Drive. If your team's living documentation is in Notion or Drive, connect those workspaces directly. Agents trained on those sources update as the docs do - there is no re-import cycle.

YouTube transcripts. Useful for product demo content, walkthrough videos, and conference talks where the canonical explanation lives in spoken form.

Q&A pairs. For high-stakes questions where you want an exact answer - pricing edge cases, regulatory disclosures, refund policy specifics - write the question and the canonical answer manually. The agent will prefer those over inferred responses.
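
The "prefer Q&A pairs" behavior amounts to checking curated answers before generating one. This sketch uses exact matching after normalizing case and punctuation, an assumption made for brevity; a production system would more likely match by semantic similarity. The `generate` callable stands in for the model, and the policy text is a placeholder.

```python
import re
from typing import Callable

def normalize(text: str) -> str:
    """Lowercase, turn punctuation into spaces, and collapse whitespace."""
    return " ".join(re.sub(r"[^a-z0-9\s]", " ", text.lower()).split())

def answer(question: str, qa_pairs: dict[str, str],
           generate: Callable[[str], str]) -> tuple[str, str]:
    """Return (answer, source): a curated Q&A answer if one matches, else a generated one."""
    curated = {normalize(q): a for q, a in qa_pairs.items()}
    key = normalize(question)
    if key in curated:
        return curated[key], "qa_pair"
    return generate(question), "generated"

# Placeholder policy text; a real deployment would use its own canonical answer.
qa_pairs = {
    "What is your refund window?": "Refunds are available within 30 days of delivery.",
}
```

Returning the source alongside the answer makes it easy to audit later which replies came from curated text and which were generated.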

A good rule of thumb: if a new hire would learn it during onboarding, the agent should have it.

Step 3: Wire up AI Actions

This is where the deployment graduates from "smart FAQ" to "agent." AI Actions are the typed functions the agent can call. Berrydesk ships a set of common ones and lets you define your own.

The standard set covers most teams: lead capture during conversations, Slack alerts to the right channel for urgent cases, calendar booking through Cal.com or your own scheduler, payment links for upsells or retention offers, web search for live information the knowledge base does not cover.

Custom actions are where teams differentiate. An e-commerce deployment usually wires up order lookup, refund initiation, and shipping address changes. A SaaS deployment connects subscription modification, plan upgrades, and seat management. A fintech deployment exposes balance checks, dispute filing, and KYC-aware account changes. Each action is defined once and the agent learns when to call it from your prompt and example conversations.

The agentic models in 2026 - particularly Claude Opus 4.7, Kimi K2.6, GLM-5.1, and MiMo-V2-Pro - are robust enough that a well-specified action with clear input types and a meaningful description will get called correctly even on novel phrasings of the request. This is what changed compared to earlier model generations, where tool-use was brittle and required heavy guardrails.
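
Here is one way "clear input types and a meaningful description" can look in code: a hypothetical `Action` record with a description for the model, typed parameters, and a validation step that rejects malformed arguments before anything touches a payment system. This is an illustration, not Berrydesk's action-definition format.

```python
from dataclasses import dataclass
from typing import Any, Callable

@dataclass
class Action:
    name: str
    description: str              # what the model reads when deciding whether to call this
    params: dict[str, type]       # parameter name -> required Python type
    handler: Callable[..., dict]  # the integration that actually does the work

    def call(self, args: dict[str, Any]) -> dict:
        """Validate model-supplied arguments, then run the handler."""
        missing = sorted(self.params.keys() - args.keys())
        unexpected = sorted(args.keys() - self.params.keys())
        if missing or unexpected:
            return {"ok": False, "error": f"missing={missing} unexpected={unexpected}"}
        for key, expected in self.params.items():
            if not isinstance(args[key], expected):
                return {"ok": False, "error": f"{key} must be {expected.__name__}"}
        return {"ok": True, "result": self.handler(**args)}

# Hypothetical refund action; the handler stands in for a payments-processor call.
refund = Action(
    name="initiate_refund",
    description="Refund a delivered order inside the return window. "
                "Call only after the order has been looked up and found eligible.",
    params={"order_id": str, "amount_cents": int},
    handler=lambda order_id, amount_cents: {"refund_id": f"rf_{order_id}", "amount_cents": amount_cents},
)
```

Returning a structured error instead of raising lets the agent read what went wrong, for example `amount_cents must be int` when it passed `"25.99"`, and retry the call with corrected arguments.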

Step 4: Brand, deploy, and route

Customize the widget - colors, avatar, suggested prompts, post-conversation forms - to match your brand. Then decide where the agent lives. Most teams start with the website widget and expand. Berrydesk supports deployment to Slack and Discord for community-led products, WhatsApp for businesses with messaging-heavy customers, and email for traditional support inboxes. The same agent runs across all of them, so a customer who starts on chat and follows up over WhatsApp does not lose context.
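
Carrying context across channels depends on resolving each channel's handle to one customer. A minimal in-memory sketch, assuming handles are linked to a customer id once the customer is verified (by email or phone, say); the class, ids, and handles are hypothetical and do not describe how Berrydesk stores conversations.

```python
from collections import defaultdict

class ConversationStore:
    """Keeps one history per customer, however many channels they write in from."""

    def __init__(self) -> None:
        self.identity: dict[tuple[str, str], str] = {}   # (channel, handle) -> customer id
        self.history = defaultdict(list)                 # customer id -> [(channel, message)]

    def link(self, channel: str, handle: str, customer_id: str) -> None:
        """Record that a channel handle belongs to a verified customer."""
        self.identity[(channel, handle)] = customer_id

    def resolve(self, channel: str, handle: str) -> str:
        # Unlinked handles get a provisional id until they are verified.
        return self.identity.get((channel, handle), f"{channel}:{handle}")

    def append(self, channel: str, handle: str, message: str) -> None:
        self.history[self.resolve(channel, handle)].append((channel, message))

    def context(self, channel: str, handle: str) -> list[tuple[str, str]]:
        return list(self.history[self.resolve(channel, handle)])

store = ConversationStore()
store.link("web", "visitor-7f3", "cus_42")
store.link("whatsapp", "+15550100", "cus_42")
store.append("web", "visitor-7f3", "My order A123 arrived damaged.")
store.append("whatsapp", "+15550100", "Following up on the damaged order.")
history = store.context("whatsapp", "+15550100")
```

The WhatsApp follow-up sees the earlier web message because both handles resolve to `cus_42`. Messages sent before a handle is linked stay under the provisional id in this sketch; merging them after verification is left out for brevity.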

If you want different agents for different surfaces - a higher-touch sales agent on the pricing page, a faster transactional agent on the checkout flow - you can spin those up as separate agents and route accordingly.

Step 5: Measure, refine, and route by model

Berrydesk's analytics surface the metrics that actually matter: resolution rate, deflection rate, escalation rate, average conversation length, customer satisfaction. Watch them weekly for the first month. The patterns will tell you what to fix.

Look for conversation types where the agent is escalating too often - those are usually missing knowledge base content or missing actions. Look for conversation types where the agent is closing too quickly - those are sometimes false positives where the customer gave up rather than getting a resolution. Look at cost per resolution by model and route accordingly: if your billing-question conversations resolve cleanly on DeepSeek V4 Flash, there is no reason to be paying GPT-5.5 Pro rates for them. If your fraud-related cases benefit from Claude Opus 4.7's stronger judgment, route those there explicitly.
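
The weekly review can start as a small aggregation over conversation records. This sketch assumes each record carries a conversation type, outcome, model, and dollar cost; the field names, the 30% alert threshold, and the sample costs are all hypothetical. It reports resolution and escalation rates per type, flags types above the threshold, and computes cost per resolution per model.

```python
from collections import defaultdict

def summarize(conversations: list[dict], escalation_alert: float = 0.3) -> dict:
    """Per-type resolution/escalation rates plus cost per resolution per model."""
    by_type = defaultdict(lambda: {"total": 0, "resolved": 0, "escalated": 0})
    by_model = defaultdict(lambda: {"cost": 0.0, "resolved": 0})
    for c in conversations:
        t = by_type[c["type"]]
        t["total"] += 1
        t["resolved"] += c["outcome"] == "resolved"     # bools count as 0 or 1
        t["escalated"] += c["outcome"] == "escalated"
        m = by_model[c["model"]]
        m["cost"] += c["cost"]                           # all spend, resolved or not
        m["resolved"] += c["outcome"] == "resolved"
    report = {}
    for kind, t in by_type.items():
        esc = t["escalated"] / t["total"]
        report[kind] = {"resolution_rate": t["resolved"] / t["total"],
                        "escalation_rate": esc,
                        "flag": esc > escalation_alert}
    cost_per_resolution = {model: m["cost"] / m["resolved"]
                           for model, m in by_model.items() if m["resolved"]}
    return {"by_type": report, "cost_per_resolution": cost_per_resolution}

# Hypothetical week of conversations.
conversations = [
    {"type": "billing", "outcome": "resolved", "model": "DeepSeek V4 Flash", "cost": 0.001},
    {"type": "billing", "outcome": "resolved", "model": "DeepSeek V4 Flash", "cost": 0.001},
    {"type": "fraud", "outcome": "escalated", "model": "Claude Opus 4.7", "cost": 0.05},
    {"type": "fraud", "outcome": "resolved", "model": "Claude Opus 4.7", "cost": 0.05},
]
report = summarize(conversations)
```

Cost per resolution divides all spend, including conversations that escalated, by resolved conversations only, so a cheap model that escalates often does not look cheaper than it is.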

The teams that get the most out of an AI support deployment treat it like any other piece of operational infrastructure. They review it. They tune it. They expand its scope as it earns trust. Six months in, they are running a meaningfully different support organization.

If you are evaluating AI support agents in 2026, the question to ask is not "can it answer questions" - every product clears that bar. The question is whether it can take action, hold context across long workflows, route intelligently across the model mix, and integrate cleanly with the systems where the real work happens. That is what Berrydesk is built for. Start at berrydesk.com and have a working agent in front of customers by the end of the day.

Chirag Asarpota is the founder of Strawberry Labs, the team behind Berrydesk - the AI agent platform that helps businesses deploy intelligent customer support, sales and operations agents across web, WhatsApp, Slack, Instagram, Discord and more. Chirag writes about agentic AI, frontier model selection, retrieval and 1M-token context strategy, AI Actions, and the engineering it takes to ship production-grade conversational AI that customers actually trust.