AI agents are quietly running more of the internet than most people realize. They are sitting behind your streaming recommendations, steering autonomous taxis through downtown traffic, scoring loan applications overnight, and resolving customer support tickets while your team sleeps.

But the phrase "AI agent" hides a huge amount of variety. The chatbot that answers a refund question is a fundamentally different machine from the model that plans a multi-step booking flow, even if both wear the same label in a marketing deck.

The five canonical types of AI agents stretch from rigid if-this-then-that systems all the way up to self-improving learners that get sharper with every conversation they have. Knowing which one you are actually buying - or building - matters, because picking the wrong tier costs money on the way up and costs trust on the way down.

This guide walks through each of the five types of agents in artificial intelligence, with concrete examples drawn from customer support and adjacent industries. By the end, you will be able to look at any "AI" pitch and place it on a clear ladder, which is the first step to choosing the right tool for the job in front of you.

Let's get into it.

What Counts as an AI Agent in the First Place

An AI agent is not just any program that takes input and produces output. A spreadsheet does that. A REST endpoint does that. What separates an agent is the way it engages with its environment.

Compare a four-function calculator to the spam filter on your inbox. The calculator waits passively for keystrokes and runs deterministic math. The spam filter watches a continuous stream of mail, learns what you flag and what you read, and quarantines messages without asking. Both are just programs, but they take completely different postures toward the world.

Three properties show up in every useful definition. Autonomy means the system acts without a human in the loop for every decision - your inbox does not ping you to ask whether each newsletter is junk. Reactivity means it perceives changes and responds to them - a market-making bot sees the order book move and adjusts its quotes within milliseconds. Proactivity means it pursues goals rather than waiting to be told what to do - the opponent in a chess program does not wait for your next move to start planning; it is already building its own tree of options.

Within those three properties is a wide spectrum. A motion-activated light is technically an agent, and so is the model-routing fabric inside Berrydesk that picks Claude Opus 4.7 for a billing escalation and DeepSeek V4 Flash for a "what are your hours" question. They share an essential pattern but differ enormously in what they can actually do. The five-type taxonomy below is the most useful map of that spectrum.

The Five Types of AI Agents at a Glance

The classic taxonomy comes from Russell and Norvig's textbook Artificial Intelligence: A Modern Approach, and it has held up well even as the underlying tech has shifted from rule engines to trillion-parameter mixture-of-experts models. Each category is defined by how the agent perceives, decides, and acts - not by how fancy its underlying model is.

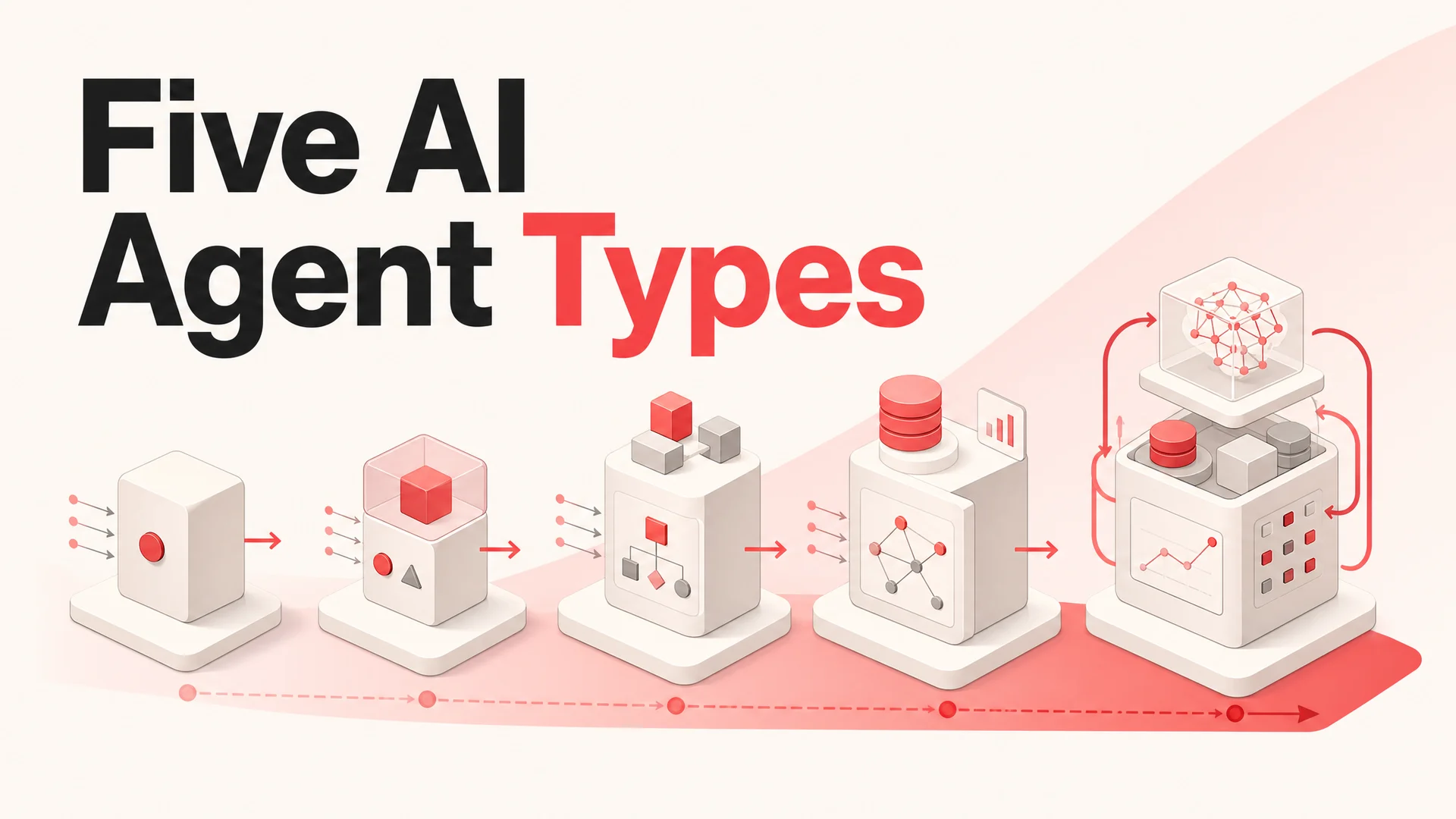

Here is the short version, before we go deep on each:

- Simple reflex agents react to the present moment with hardcoded rules.

- Model-based reflex agents add an internal map of the world so they can act when they cannot see everything.

- Goal-based agents plan ahead toward an explicit objective.

- Utility-based agents weigh trade-offs and pick the option with the highest expected value.

- Learning agents improve themselves over time from experience and feedback.

Each tier subsumes the one below it. A learning agent typically still has a goal, still keeps an internal model, and still reacts to the present - it just adds a self-improvement loop on top.

1. Simple Reflex Agents

Simple reflex agents are the most primitive form. They operate on a strict condition-action loop: read a sensor, check a rule, perform an action, repeat. There is no memory, no anticipation, and no concept of context beyond the current input.

Mechanically, the agent perceives the world through a sensor or input channel, looks up the perception in a table of if-then rules, and emits the matching action. If the lookup misses, the agent either does nothing or fires a default behavior. There is no notion of "what just happened a second ago" or "what might happen next."
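The condition-action loop described above can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's implementation: the rule table, the action names, and the default action are all hypothetical.

```python
from typing import Callable

# Hypothetical rule table: each entry pairs a condition on the current
# percept with the action to emit. Order matters; the first match wins.
Rule = tuple[Callable[[str], bool], str]

RULES: list[Rule] = [
    (lambda msg: "refund" in msg, "send_refund_policy"),
    (lambda msg: "hours" in msg, "send_opening_hours"),
    (lambda msg: "password" in msg, "send_reset_link"),
]

DEFAULT_ACTION = "hand_off_to_human"  # hypothetical fallback


def simple_reflex_agent(percept: str) -> str:
    """Map the current percept to an action. No state survives the call."""
    text = percept.lower()
    for condition, action in RULES:
        if condition(text):
            return action
    return DEFAULT_ACTION  # lookup missed: fire the default behavior


print(simple_reflex_agent("What are your hours?"))  # send_opening_hours
print(simple_reflex_agent("My parcel is late"))     # hand_off_to_human
```

Because the function keeps no state, calling it three times with the same message returns the same action three times.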

Real-world examples

Automatic doors trigger their motors the moment a motion sensor sees movement. They have no idea who walked through five seconds ago, no memory of yesterday's foot traffic, and no model of whether the building is open. They just react.

Keyword-only chatbots scan an incoming message for trigger words and pull the matching canned response. Ask the same question three times and you get the same scripted answer three times, because there is no record of the previous turn. This is what most "chatbots" looked like before LLMs, and there are still plenty of them in the wild.

Old-school thermostats are pure reflex agents. Below 68°F, run the heater. Above it, stop. They cannot tell winter from summer, learn your schedule, or care that you left for the weekend.

These are textbook examples of automation that does not need intelligence to function - and that is fine, as long as the problem really is that simple.

Strengths

- Speed. Decisions are essentially free, which is why reflex logic still runs inside critical-path systems like circuit breakers and airbag controllers.

- Cheap to operate. No memory, no model, no inference cost.

- Utterly predictable. In a stable environment, the behavior is provable and auditable.

Limitations

- No context. They cannot handle situations the rule table did not anticipate.

- Brittle when the world drifts. Move the sensor, change the input distribution, and behavior collapses.

- Stuck in loops. A reflex agent that runs into a wall and keeps firing the "go forward" action will sit there until something else intervenes.

Where they fit

Use simple reflex agents when the environment is small, stable, and well-understood, and where speed matters more than nuance. They are the right tool for a triage layer that classifies an inbound ticket as "billing" vs "technical" before passing it to a smarter model, or for a hard guardrail that blocks any message containing a banned phrase before it reaches a customer.

They are the wrong tool for anything that involves dialogue, ambiguity, or judgment.

2. Model-Based Reflex Agents

Model-based reflex agents add the missing ingredient: memory. Specifically, they maintain an internal model of the world that gets updated each time they perceive something new. That model lets them reason about parts of the environment they cannot directly see right now.

Think of it like keeping a sketch of a room in your head while you walk through it with your eyes closed. Each step, you update the sketch based on what you bumped into and how you moved. The sketch is never perfect, but it is good enough to navigate.

The leap from simple reflex to model-based reflex is the leap from "react to what is in front of me" to "react to my best estimate of what is actually going on."
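A rough sketch of that difference, assuming a toy vacuum that only senses the room it is standing in. The class name, room names, and action strings are invented for illustration; a real robot would maintain a far richer map.

```python
from dataclasses import dataclass, field


@dataclass
class ModelBasedVacuum:
    """Hypothetical agent that remembers what it has already observed."""
    rooms: tuple[str, ...]
    # Internal model: last known status of each room.
    belief: dict[str, str] = field(default_factory=dict)

    def __post_init__(self) -> None:
        # Nothing has been observed yet, so every room starts as "unknown".
        self.belief = {room: "unknown" for room in self.rooms}

    def step(self, current_room: str, is_dirty: bool) -> str:
        # 1. Update the model from the new percept.
        self.belief[current_room] = "dirty" if is_dirty else "clean"
        # 2. Decide from the model, not from the raw percept alone.
        if is_dirty:
            self.belief[current_room] = "clean"  # expected effect of cleaning
            return "clean"
        for room in self.rooms:
            if self.belief[room] != "clean":
                return f"move_to:{room}"  # a room it cannot currently see
        return "return_to_dock"


vacuum = ModelBasedVacuum(rooms=("kitchen", "hall"))
print(vacuum.step("kitchen", is_dirty=True))   # clean
print(vacuum.step("kitchen", is_dirty=False))  # move_to:hall
print(vacuum.step("hall", is_dirty=False))     # return_to_dock
```

The second call is the interesting one: the percept only says "this room is clean," yet the agent heads for the hall because its model says the hall has not been checked.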

Real-world examples

Robotic vacuums like the high-end Roborock and iRobot models do not just bounce off walls. They build a SLAM map of your home, mark which rooms are clean, and remember where the dock is even when they cannot see it. The action they take next depends on the model in their head, not just the bumper that just got triggered.

Smart home security systems learn the rhythm of a household - when doors open, when lights come on, when the dog walker arrives. When the kitchen door opens at 3 a.m. and the model says "this is unusual," they escalate to an alert.

Warehouse sortation robots scan a barcode at one end of a conveyor and act on the package three seconds later at the other end, even though the box has long since left the scanner's view. The internal model carries the package's identity through the gap.

Strengths

- Robust to missing information. The agent keeps acting sensibly when a sensor is blocked or noisy.

- Context-aware. Decisions reflect a running picture of the world, not a single snapshot.

- Avoids redundant work. A vacuum that knows it cleaned the kitchen does not clean it again on the same run.

Limitations

- Heavier to run. Maintaining and updating the world model takes memory and compute.

- Model drift. If reality changes - someone moves the couch - and the agent does not update fast enough, it will confidently do the wrong thing.

- Hidden bugs. Errors in the model can be subtle and slow to surface, because the agent looks like it is working until it suddenly is not.

Where they fit

Anything involving navigation, tracking, or multi-step processes where state matters benefits from a model. Hospital monitors that track a patient's vitals over time are model-based agents. So is the inventory tracker that reasons about stock levels between physical counts.

In customer support, a model-based reflex layer is what lets a chatbot remember that a user is mid-checkout and tailor its answers to the cart they are holding, rather than treating every message as a cold start.

3. Goal-Based Agents

Goal-based agents bring planning into the picture. Instead of just reacting to what is happening, they look ahead at possible futures and choose actions that move them toward an explicit goal.

The internal monologue of a goal-based agent looks like: "If I take action A, the world will probably look like X. If I take action B, it will probably look like Y. Y is closer to my goal, so I will do B." This requires both a model of the world (inherited from the previous tier) and a search procedure that explores possible action sequences.

The big practical advantage is that you can change the goal without rewriting the agent. The same self-driving stack that takes you to the airport can take you to the grocery store, because the destination is a parameter, not hardcoded behavior.
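To make "the destination is a parameter" concrete, here is a small sketch that uses breadth-first search as the planner. The road map is made up, and production planners use much more sophisticated search; the point is only that the same `plan` function serves any goal you hand it.

```python
from collections import deque
from typing import Optional

# Hypothetical road map: each location lists the locations reachable from it.
ROADS: dict[str, list[str]] = {
    "home": ["main_st", "ring_rd"],
    "main_st": ["grocery", "downtown"],
    "ring_rd": ["airport"],
    "downtown": ["airport"],
    "grocery": [],
    "airport": [],
}


def plan(start: str, goal: str, roads: dict[str, list[str]]) -> Optional[list[str]]:
    """Breadth-first search: return a shortest route to `goal`, or None."""
    frontier = deque([[start]])
    visited = {start}
    while frontier:
        path = frontier.popleft()
        if path[-1] == goal:
            return path
        for nxt in roads.get(path[-1], []):
            if nxt not in visited:
                visited.add(nxt)
                frontier.append(path + [nxt])
    return None  # no sequence of actions reaches the goal


print(plan("home", "airport", ROADS))  # ['home', 'ring_rd', 'airport']
print(plan("home", "grocery", ROADS))  # ['home', 'main_st', 'grocery']

# Replanning: the ring road is blocked, so the planner finds another way.
blocked = {**ROADS, "ring_rd": []}
print(plan("home", "airport", blocked))  # ['home', 'main_st', 'downtown', 'airport']
```

Changing the goal or the map changes the route without touching the planner, which is the property the paragraph above describes.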

Real-world examples

Self-driving systems plan a route, evaluate alternatives in real time as traffic shifts, and replan when an obstacle blocks the original path. The destination is the goal; the planner does the rest.

Game-playing engines from chess to Go to StarCraft search through possible move sequences and pick the one most likely to lead to victory. Modern engines like AlphaZero blend goal-based search with learning, which we will get to in tier five.

Adaptive fitness apps take a user goal - lose ten pounds, run a 5K, build muscle - and generate a workout plan, then revise the plan when the user falls behind or pulls ahead.

Strengths

- Goal-flexibility. Changing the objective does not require rewriting code, just supplying a new target.

- Forward-looking. The agent can avoid actions that look fine right now but lead to a dead end.

- Strong at problem-solving. When a path closes, a goal-based agent can find another way through instead of giving up.

Limitations

- Search is expensive. Looking many steps ahead means evaluating exponentially many futures.

- Slower than reflex. Planning takes time, which matters for latency-sensitive use cases like voice.

- Model accuracy is critical. If the world model is wrong, the plan will be wrong in confidently elaborate ways.
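To put a rough number on the search cost: assume a planner that considers b actions at each step and looks d steps ahead (both symbols are illustrative, not taken from any particular system). In the worst case, the number of states it has to evaluate is a geometric sum:

```latex
N(b, d) = \sum_{i=0}^{d} b^{i} = \frac{b^{d+1} - 1}{b - 1}, \qquad b > 1
```

With b = 10 and d = 6 that is already 1,111,111 states, which is why practical planners prune, lean on heuristics, or cap the lookahead depth.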

Where they fit

Goal-based agents shine when there are multiple legitimate ways to reach an outcome and the right path depends on the current state. Trip planning, project orchestration, and complex agentic tool-use workflows - like a support agent that needs to look up an order, check shipping status, issue a partial refund, and email a confirmation - all fit this pattern.

The new generation of agentic models is built around exactly this loop. Moonshot's Kimi K2.6, released in April 2026, runs autonomous coding sessions of up to 12 hours and orchestrates swarms of up to 300 sub-agents across 4,000 coordinated steps. Z.ai's GLM-5.1 runs an 8-hour plan-execute-test-fix loop and scores 58.4 on SWE-Bench Pro, beating Claude Opus 4.6 on that benchmark. These models are effectively goal-based agents in production form, and they are what makes AI Actions in tools like Berrydesk - booking, refunds, payment flows, order lookups - actually work end-to-end rather than fall apart on the third step.

4. Utility-Based Agents

Utility-based agents go a step beyond goal-based ones. They do not just want to reach a goal - they want to reach the best of several acceptable goals, weighing competing factors against each other.

The mechanism is a utility function: a numerical score that captures how desirable a particular outcome is. The agent simulates possible actions, estimates the resulting world states, scores each one with the utility function, and picks the action whose expected utility is highest.

Where a goal-based agent is happy with any path that reaches the destination, a utility-based agent asks: which path is the best? Cheaper? Faster? Safer? More comfortable? You define the trade-offs; the agent optimizes them.
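Here is a minimal sketch of expected-utility selection, assuming two flight options whose travel time is uncertain. Every probability, price, and weight below is invented for illustration; the structure (simulate outcomes, score each, pick the highest expected score) is the part that matters.

```python
# Each action leads to several possible outcomes: (probability, outcome).
# All numbers are hypothetical.
ACTIONS = {
    "direct_flight": [
        (0.9, {"price": 420, "hours": 3.0}),
        (0.1, {"price": 420, "hours": 5.0}),   # delayed
    ],
    "one_layover": [
        (0.7, {"price": 260, "hours": 6.5}),
        (0.3, {"price": 260, "hours": 11.0}),  # missed connection
    ],
}


def utility(outcome, price_weight=1.0, hour_weight=40.0):
    """Higher is better, so costs enter with a negative sign."""
    return -(price_weight * outcome["price"] + hour_weight * outcome["hours"])


def expected_utility(outcomes, **weights):
    """Probability-weighted average utility over an action's outcomes."""
    return sum(p * utility(o, **weights) for p, o in outcomes)


def choose(actions, **weights):
    """Pick the action with the highest expected utility."""
    return max(actions, key=lambda a: expected_utility(actions[a], **weights))


print(choose(ACTIONS))                    # direct_flight
print(choose(ACTIONS, hour_weight=10.0))  # one_layover
```

A traveler who values an hour at 40 units gets the direct flight; drop that to 10 and the same agent picks the layover. Only a weight changed, not the code.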

Real-world examples

Flight search engines balance price, layovers, total travel time, departure window, baggage policy, and dozens of other factors. The "best" flight depends on a utility function tuned to each user, which is why personalized search has become a wedge against generic comparison sites.

Dynamic pricing engines at Uber, Lyft, hotel chains, and airlines do not just pick a goal price - they continuously weigh supply, demand, weather, time, competitor prices, and predicted churn to find the price that maximizes total value to the platform.

Portfolio managers, both human and algorithmic, balance return against risk. The objective is not "make the most money" - it is "achieve the best risk-adjusted return given the client's tolerance and time horizon," which is a utility function in plain English.

Strengths

- Trade-off aware. They are the right tool whenever "good enough" is not good enough and you need to find the optimum.

- Handles uncertainty. Probabilistic utility lets the agent reason about expected value when outcomes are not guaranteed.

- Tunable. Adjust the weights in the utility function and the agent's behavior changes accordingly, no retraining required.

Limitations

- Garbage in, garbage out. A utility function that does not capture what humans actually care about will produce technically optimal but practically wrong choices.

- Compute-heavy. Scoring many futures across many factors adds up quickly.

- Hard to specify. Encoding fuzzy human preferences like "feels safe" or "doesn't sound robotic" as numbers is genuinely difficult, and small mis-specifications can compound.

Where they fit

Pricing, routing, capacity planning, ad bidding, and any kind of constrained resource allocation are natural homes for utility-based agents. In support, a utility-based router decides which model to send each ticket to: route routine "where is my order" questions to a cheap, fast model like DeepSeek V4 Flash at $0.14 per million input tokens, and reserve Claude Opus 4.7 at the top of SWE-Bench Pro (64.3%) for the gnarly escalations that justify the cost. The utility function balances cost per resolution, latency, and answer quality - exactly the kind of multi-axis optimization this tier is built for.
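A router like that could be sketched as follows. The two model profiles are placeholders rather than measurements of any real model, and the per-ticket costs, latencies, resolution rates, and weights are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    name: str
    cost_per_ticket: float  # USD per conversation (hypothetical)
    latency_s: float        # typical response time in seconds (hypothetical)
    p_resolve_easy: float   # chance of resolving a routine ticket
    p_resolve_hard: float   # chance of resolving a hard escalation


MODELS = [
    ModelProfile("cheap_fast", 0.001, 1.0, 0.95, 0.40),
    ModelProfile("frontier", 0.050, 4.0, 0.97, 0.85),
]


def score(model, hard, value_of_resolution=5.0, latency_penalty=0.05):
    """Expected value of a resolution minus model cost and a latency charge."""
    p = model.p_resolve_hard if hard else model.p_resolve_easy
    return (p * value_of_resolution
            - model.cost_per_ticket
            - latency_penalty * model.latency_s)


def route(hard: bool) -> str:
    """Send the ticket to whichever model has the highest utility."""
    return max(MODELS, key=lambda m: score(m, hard)).name


print(route(hard=False))  # cheap_fast
print(route(hard=True))   # frontier
```

On routine tickets the small quality edge of the larger model does not pay for its extra cost and latency; on hard ones it does. In practice the resolution rates would come from your own evaluation data, not from constants.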

5. Learning Agents

Learning agents sit at the top of the hierarchy. They include everything from the previous tiers and add a feedback loop that lets them improve over time. Their behavior is not fixed at deployment - it evolves as they encounter new situations and receive signals about what worked and what did not.

The classical breakdown has four pieces. The performance element is the part that actually decides and acts. The critic evaluates how well the actions performed against some standard. The learning element uses that evaluation to update the performance element. And the problem generator suggests novel actions to try, so the agent does not get stuck in a local optimum exploiting what it already knows.
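One way to see the four pieces working together is an epsilon-greedy bandit, a deliberately simple stand-in for the much larger training loops used in practice. The reply styles, help rates, and parameter values below are hypothetical.

```python
import random


class LearningAgent:
    def __init__(self, actions, epsilon=0.1, seed=0):
        self.actions = list(actions)
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.value = {a: 0.0 for a in self.actions}  # learned estimates
        self.count = {a: 0 for a in self.actions}

    def act(self):
        # Problem generator: occasionally try something other than the best.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.actions)
        # Performance element: otherwise exploit what has been learned.
        return max(self.actions, key=self.value.get)

    def learn(self, action, reward):
        # Learning element: running average of the critic's rewards.
        self.count[action] += 1
        self.value[action] += (reward - self.value[action]) / self.count[action]


def critic(helped: bool) -> float:
    """Critic: turn raw feedback ("did this answer help?") into a reward."""
    return 1.0 if helped else 0.0


def simulate(steps=2000, seed=0):
    # Hidden from the agent: how often each hypothetical reply style helps.
    true_help_rate = {"short_reply": 0.2, "step_by_step": 0.9}
    env = random.Random(seed + 1)
    agent = LearningAgent(true_help_rate, seed=seed)
    for _ in range(steps):
        action = agent.act()
        helped = env.random() < true_help_rate[action]
        agent.learn(action, critic(helped))
    return agent


agent = simulate()
print(agent.value)  # estimates should approach the hidden help rates
print(agent.count)  # the better style should be chosen far more often
```

Without the exploration step the agent would lock onto whichever style it tried first, which is the local-optimum trap the problem generator exists to avoid.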

The practical upshot: a learning agent can discover strategies its designers never thought of, and it can keep getting better long after the original engineers have moved on to other projects.

Real-world examples

Self-driving fleets treat every disengagement - every time a human takes the wheel - as a labeled training example. The fleet acts as a giant problem generator, sampling rare and weird scenarios that no simulator could enumerate. Over time, the underlying model improves on exactly the situations where it used to fail.

Frontier LLMs like GPT-5.5, Claude Opus 4.7, and Gemini 3.1 Ultra are the most visible learning agents on the planet. Reinforcement learning from human feedback (RLHF), constitutional AI, and newer reward-modeling techniques act as the critic; user preferences, helpfulness scores, and safety evaluations train the next generation of weights. Open-weight contenders like DeepSeek V4, Kimi K2.6, Qwen 3.6, MiniMax M2.7, and Xiaomi MiMo-V2-Pro are pushing the same loop in public, with the additional twist that anyone can take the open weights and continue training on their own data.

Customer support agents with feedback hooks are a smaller but very practical example. When a deployed agent asks "did this answer help?" and uses the response - combined with whether the conversation was escalated, whether the user came back, and whether the ticket reopened - to fine-tune retrieval rankings, prompt structure, or routing policies, it is a learning agent in the textbook sense.

Strengths

- Adapts to change. When the underlying environment shifts, a learning agent updates rather than breaking.

- Handles the unforeseen. It can deal with scenarios the original designers never anticipated.

- Compounds. Quality improves over time without requiring engineers to write new code for every new situation.

- Discovers novel strategies. Sometimes the agent finds a better approach than its creators ever imagined.

Limitations

- Data-hungry. Real learning needs many examples and high-quality feedback, which most organizations underestimate.

- Expensive to train. Frontier-scale training is a multimillion-dollar endeavor; even fine-tuning is non-trivial.

- Can learn the wrong lesson. Biased feedback produces biased agents, and reward hacking - gaming the metric instead of solving the underlying problem - is a constant risk.

- Requires governance. Learning agents drift, and drift can be subtle. You need monitoring, evaluation, and the ability to roll back.

Where they fit

Anywhere the environment changes faster than humans can rewrite rules, and where you have a real feedback signal to learn from. Search ranking, recommendation systems, fraud detection, autonomous vehicles, and AI customer support all fit. The same Berrydesk agent that handles a thousand conversations a day is generating exactly the kind of structured feedback - resolutions, escalations, thumbs up and down, follow-up tickets - that a learning loop can use to improve.

What to Watch Out For

Choosing the wrong tier is the most expensive mistake teams make with AI agents, and it goes both directions.

Over-engineering. A reflex agent solves "route this ticket to the right queue" perfectly well. Building a learning agent for the same job adds cost, latency, governance burden, and a long list of failure modes you did not have before. Match complexity to the actual decision being made, not to what looks impressive in a board deck.

Under-engineering. On the other side, trying to fake a goal-based or learning agent with rule tables is a common death spiral. Every new edge case becomes another rule, the rule table becomes unmaintainable, and the team ends up rewriting it from scratch on a modern model anyway. If the problem genuinely requires planning or adaptation, build for that from the start.

Mis-specified utilities and goals. Both goal-based and utility-based agents are only as good as the targets you give them. A support agent optimized purely for "shortest conversation" will hang up on hard problems. A pricing agent optimized purely for "maximize revenue this quarter" will torch retention. Spend more time on the objective than feels comfortable.

Drift in learning systems. Learning agents need monitoring or they will quietly degrade - by overfitting to recent traffic, by learning from a feedback loop that is itself biased, or by drifting toward whatever the loudest users want regardless of business intent. Treat the eval pipeline as part of the product, not an afterthought.

Open-Weight vs Closed Frontier: A Quick Trade-Off

The other big decision in 2026 is which model family to build your agent on. The landscape has shifted faster in the last twelve months than in the previous three years combined.

Closed frontier models - GPT-5.5 and 5.5 Pro, Claude Opus 4.7 and Sonnet 4.6, Gemini 3.1 Ultra and Pro - still set the ceiling on raw quality, especially on the hardest reasoning and tool-use tasks. Claude Opus 4.7 leads SWE-Bench Pro at 64.3%; Gemini 3.1 Pro leads GPQA Diamond at 94.3%; Sonnet 4.6 ships a 1M-token context window with no surcharge. If your support agent absolutely needs the best available reasoning on a billing dispute or a regulated medical question, this is where you go.

Open-weight frontier models - DeepSeek V4, Kimi K2.6, GLM-5.1 (MIT license), Qwen 3.6 (Apache 2.0 for the dense and 35B-A3B variants), MiniMax M2.7, and Xiaomi MiMo-V2-Pro (MIT) - have collapsed the cost of running production agents and made on-prem and air-gapped deployments genuinely viable. MiniMax M2.7 ships at roughly 8% of the price of Claude Sonnet at 2x the speed; DeepSeek V4 Flash is $0.14 / $0.28 per million input/output tokens. For routine traffic, the per-resolution cost has dropped to fractions of a cent.

The right answer for most support deployments is not "pick one." It is to build a utility-based router on top of a learning agent: use a cheap open-weight model for the 80% of conversations that are routine, route the long tail to a frontier closed model, and feed every outcome back into the system so it gets better at routing and at answering. That is how Berrydesk customers run agents that handle five-figure ticket volumes without burning a five-figure model bill.

Bringing It Back to Customer Support

If you map the five tiers onto a real support deployment, you can see why "AI customer support" is not a single product - it is a stack.

A reflex layer enforces hard rules: profanity filters, never-discuss topics, instant escalation triggers. A model-based layer keeps track of the customer's session, cart, recent tickets, and entitlements. A goal-based layer plans and executes multi-step actions through tool calls - looking up an order, generating a refund, scheduling a callback. A utility-based layer routes each turn to the right model and decides when to escalate to a human. And a learning layer closes the loop, using outcomes to improve every other layer over time.
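The layering can be sketched as a single request handler. This is a toy illustration of the idea, not Berrydesk's architecture: the trigger word, topics, plans, and model names are all hypothetical.

```python
BANNED = {"lawsuit"}  # hypothetical instant-escalation trigger

# Hypothetical multi-step plans, keyed by conversation topic.
PLANS = {
    "refund": ["look_up_order", "issue_refund", "email_confirmation"],
    "order": ["look_up_order", "reply"],
}


def handle_turn(message: str, session: dict) -> dict:
    text = message.lower()
    # Reflex layer: hard rules run first and short-circuit everything else.
    if any(word in text for word in BANNED):
        return {"action": "escalate_to_human"}
    # Model-based layer: fold the new message into the session state.
    session.setdefault("history", []).append(message)
    if "refund" in text:
        session["topic"] = "refund"
    elif "order" in text:
        session["topic"] = "order"
    # Goal-based layer: pick the plan that achieves the current topic's goal.
    plan = PLANS.get(session.get("topic"), ["reply"])
    # Utility-based layer: pay for the stronger model only when money moves.
    model = "frontier" if "issue_refund" in plan else "cheap_fast"
    return {"action": "run_plan", "plan": plan, "model": model}


def record_outcome(log: list, result: dict, resolved: bool) -> None:
    # Learning layer: keep outcomes so routing and answers can be tuned later.
    log.append({**result, "resolved": resolved})


session: dict = {}
print(handle_turn("I want a refund for my order", session)["model"])  # frontier
print(handle_turn("Yes please", session)["plan"][1])                  # issue_refund
print(handle_turn("Where is my order?", {})["model"])                 # cheap_fast
```

The second call shows the session state at work: "Yes please" carries no topic on its own, but the handler still knows the conversation is about a refund.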

You do not need to build all of that yourself. That stack is exactly what Berrydesk gives you out of the box, with your choice of model - GPT, Claude, Gemini, DeepSeek, Kimi, GLM, Qwen, MiniMax, and more - your data sources (docs, websites, Notion, Google Drive, YouTube), your branding on the widget, your AI Actions for bookings and payments, and deployment to the channels your customers actually use, from your website to Slack, Discord, and WhatsApp.

The five-type taxonomy is useful precisely because it tells you which questions to ask. Is the decision context-free, or does it require memory? Does it need planning, or just reaction? Is there a real trade-off to optimize, or just a goal to hit? Is the environment stable, or does it change underneath you?

Once you can answer those questions for your own support workflow, picking the right kind of agent - and the right place to deploy it - gets a lot easier. If you would like to skip the integration work and just get an agent live, start a free Berrydesk workspace and you can be answering tickets the same afternoon.


Chirag Asarpota is the founder of Strawberry Labs, the team behind Berrydesk - the AI agent platform that helps businesses deploy intelligent customer support, sales and operations agents across web, WhatsApp, Slack, Instagram, Discord and more. Chirag writes about agentic AI, frontier model selection, retrieval and 1M-token context strategy, AI Actions, and the engineering it takes to ship production-grade conversational AI that customers actually trust.