The numbers around AI in customer support have stopped being speculative. After three years of frontier-model leaps, plus a 2026 wave of open-weight models from DeepSeek, Z.ai, Moonshot, Alibaba, MiniMax, and Xiaomi, the question is no longer whether AI belongs in your help desk - it is how much of the work it should be doing, and which model should be doing each piece.

This post pulls together twenty statistics that frame where the industry actually sits today: who is using AI, what it is solving, what it is costing, and where the gains show up in CSAT, handle time, and churn. We have grouped them into adoption, performance, and outcomes, and added a layer most stat roundups skip - what the May 2026 model landscape (1M-token context windows, agentic tool use, sub-cent-per-resolution open weights) means for each number on the list.

If you are a support leader trying to build the case for an agent platform, or an operator trying to size the opportunity for your own team, this is the snapshot to start from.

Adoption: AI is the default, not the experiment

1. 56% of business owners use AI for customer service tasks

Customer service has held the top slot in business AI use cases for two years running, ahead of marketing, HR, and finance. The reason is structural: support has high volume, repeatable patterns, clear success signals (resolution, CSAT, escalation), and a workforce already used to scripted workflows. That is the perfect substrate for an LLM to operate in.

2. 80% of companies use AI in customer interactions; 95% of decision-makers say it cuts cost and time

This is the headline number. AI in support has crossed the chasm - it is now mainstream infrastructure, not an innovation pilot. The 95% confidence figure on cost and time savings tracks with what we hear from Berrydesk customers: a deployment that routes routine traffic to a cheap open-weight model like DeepSeek V4 Flash ($0.14 / $0.28 per million input/output tokens) and reserves Claude Opus 4.7 or GPT-5.5 for the hard escalations typically pays back in the first billing cycle.

3. 90% of Fortune 500 companies invest in AI for customer service

When the largest support organizations in the world commit budget at this level, it tells you the technology has cleared the procurement, security, and reliability bars that used to stop enterprise rollouts. The recent arrival of MIT- and Apache-licensed Chinese open weights - GLM-5.1, Qwen3.6-27B, MiMo-V2-Pro - extends that bar to regulated industries that need on-prem or air-gapped deployments.

4. 75% of small and midsize businesses now use AI in customer service

Adoption used to lag at the SMB tier because the cost of integration outpaced the value of automation at low ticket volumes. That gap has closed. A lean operator can spin up a Berrydesk agent in an afternoon, point it at a help center URL, and start deflecting tickets before a custom build would have finished its first sprint.

5. 67% of contact centers plan to increase AI budgets next year

Two-thirds of contact centers expanding spend, not maintaining or cutting, is a signal that the early returns are real. The next wave of investment is going less into deflection bots and more into agent-assist, sentiment routing, and AI Actions - the kind of tool-using agents that book, refund, and reschedule on the customer's behalf rather than just answering questions.

6. 37% of businesses use chatbots for support, and they reply about 3× faster than human agents

The 3× speed multiplier is conservative for current-generation models. With streaming responses and parallel reasoning (GPT-5.5 Pro, Gemini 3.1 Ultra), first-token latency on most help queries lands well under a second, and full responses arrive faster than a human can finish reading the customer's last message. Speed alone is not a differentiator anymore - it is table stakes.

Performance: faster, cheaper, more accurate

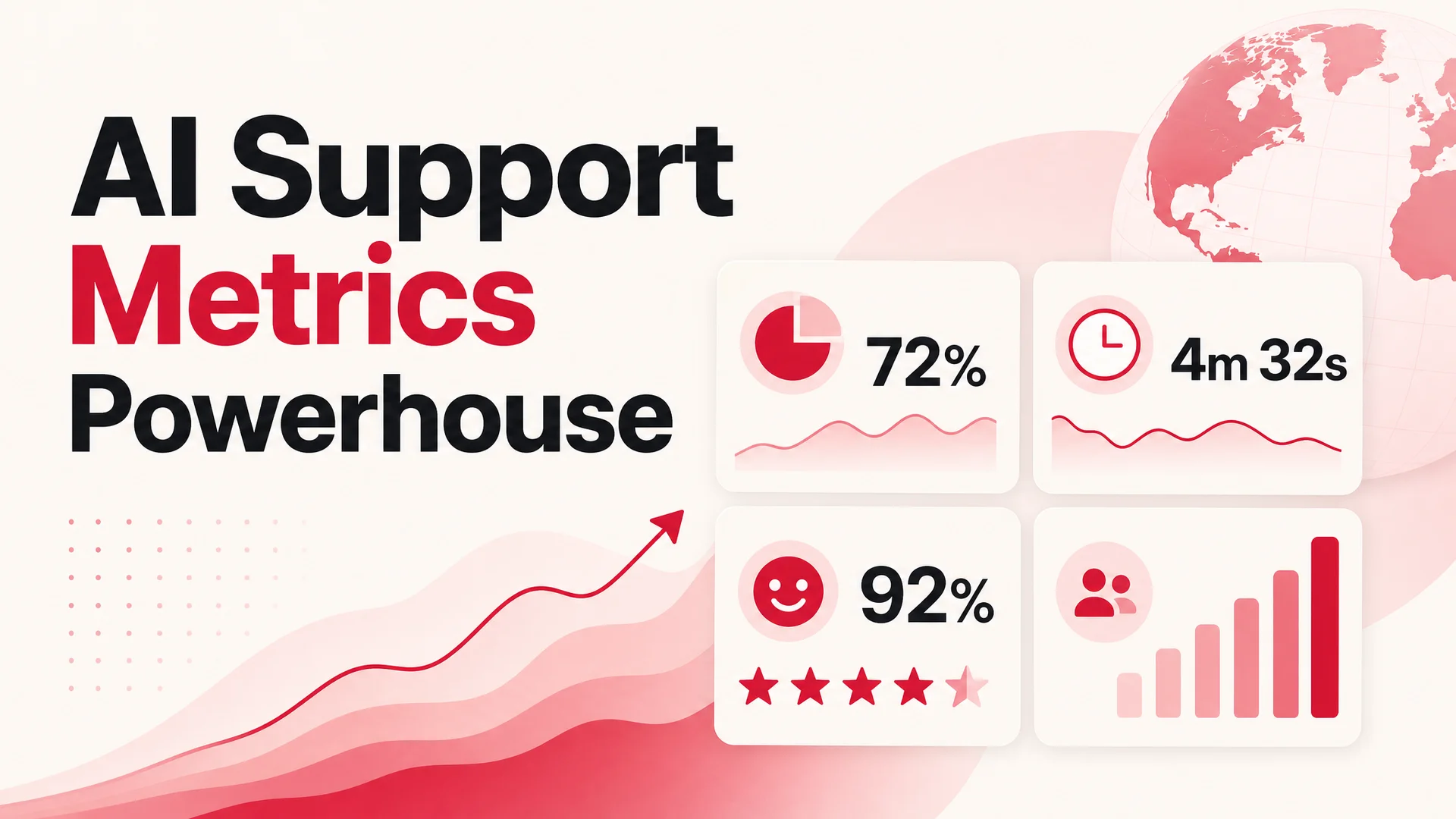

7. AI-driven support cuts average handle time by 40% and lifts CSAT by 30%

Handle time used to drop at the cost of satisfaction - fewer minutes per ticket, but a more frustrated customer. The current generation flips that. Models with 1M-token context (Claude Opus 4.6 / Sonnet 4.6, DeepSeek V4) can hold the whole customer history, the relevant policy docs, and the conversation in working memory, so the answer is both faster and more accurate. That is the rare AI gain that compounds in both directions.

8. Conversational AI reduces average handle time by 27% in agent-assist mode

The 27% number applies specifically to human agents using AI suggestions, not full deflection. This is the underrated half of the AI support story: even tickets that need a human end faster when the agent is reading model-generated draft replies, summarized history, and suggested next actions in a side panel. Most teams underbuild this half of the stack and leave much of that efficiency on the table.

9. AI-powered tools cut resolution time by up to 50%

Half-time resolution comes from compounding three effects: instant first response, fewer back-and-forths because the model can ask better clarifying questions, and end-to-end automation through AI Actions. A Berrydesk agent that can actually look up an order, issue a partial refund, and reschedule a delivery - instead of telling the customer "I will pass this to a human" - collapses the long tail of ticket lifecycles.
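To make the difference between answering and acting concrete, here is a minimal Python sketch of how an action-capable agent might dispatch tool calls. It assumes the model emits a tool name plus JSON-style arguments; the tool names, the in-memory `ORDERS` store, and the `dispatch` helper are hypothetical illustrations, not Berrydesk's actual API.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict

# Hypothetical in-memory order store standing in for a real order system.
ORDERS: Dict[str, dict] = {
    "A100": {"status": "in_transit", "total": 80.0, "refunded": 0.0, "delivery_date": "2026-05-20"},
}


def lookup_order(order_id: str) -> dict:
    """Return the stored order record (raises KeyError for unknown IDs)."""
    return ORDERS[order_id]


def issue_partial_refund(order_id: str, amount: float) -> dict:
    """Refund part of an order, refusing amounts beyond what was paid."""
    order = ORDERS[order_id]
    if amount <= 0 or order["refunded"] + amount > order["total"]:
        raise ValueError("refund amount out of range")
    order["refunded"] += amount
    return {"order_id": order_id, "refunded": order["refunded"]}


def reschedule_delivery(order_id: str, new_date: str) -> dict:
    """Move the delivery date (no carrier validation in this sketch)."""
    ORDERS[order_id]["delivery_date"] = new_date
    return {"order_id": order_id, "delivery_date": new_date}


# Registry mapping the tool names a model would emit to the functions that run them.
TOOLS: Dict[str, Callable[..., Any]] = {
    "lookup_order": lookup_order,
    "issue_partial_refund": issue_partial_refund,
    "reschedule_delivery": reschedule_delivery,
}


@dataclass
class ToolCall:
    name: str
    args: dict


def dispatch(call: ToolCall) -> dict:
    """Run a model-requested tool call; unknown tools and bad arguments become error results."""
    handler = TOOLS.get(call.name)
    if handler is None:
        return {"ok": False, "error": f"unknown tool: {call.name}"}
    try:
        return {"ok": True, "result": handler(**call.args)}
    except (KeyError, ValueError, TypeError) as exc:
        return {"ok": False, "error": str(exc)}
```

Returning errors as data rather than raising them lets the model read the failure (for example, a refund larger than the order total) and either retry with corrected arguments or hand the conversation to a human.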

10. AI chatbots resolve up to 86% of customer questions without a human

The 86% ceiling assumes a well-scoped knowledge base, a model with strong tool use, and good guardrails. With agentic models like Kimi K2.6 (which can run 12-hour autonomous sessions and coordinate up to 300 sub-agents) or Qwen3.6-27B (a 27B-parameter dense model that beats some 397B-parameter MoE rivals on agentic coding benchmarks), even multi-step workflows - refund + tracking lookup + reshipment - stay inside the bot.
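Keeping a multi-step workflow inside the bot still needs guardrails. The sketch below assumes a hypothetical plan format (a list of `Step` objects produced by the model) and a made-up `AUTO_REFUND_LIMIT`; it runs the steps in order and stops for human approval at the first one that crosses the limit.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Hypothetical guardrail: refunds above this amount go to a human for approval.
AUTO_REFUND_LIMIT = 50.0


@dataclass
class Step:
    action: str  # e.g. "lookup_tracking", "refund", "reship"
    params: dict


@dataclass
class Outcome:
    completed: List[str] = field(default_factory=list)
    escalated: bool = False
    reason: str = ""


def guardrail_violation(step: Step) -> Optional[str]:
    """Return a reason string if the step needs human approval, else None."""
    if step.action == "refund" and step.params.get("amount", 0.0) > AUTO_REFUND_LIMIT:
        return f"refund of {step.params['amount']} exceeds auto-approval limit {AUTO_REFUND_LIMIT}"
    return None


def run_plan(steps: List[Step]) -> Outcome:
    """Execute a multi-step plan in order, stopping at the first step that trips a guardrail."""
    outcome = Outcome()
    for step in steps:
        reason = guardrail_violation(step)
        if reason is not None:
            outcome.escalated = True
            outcome.reason = reason
            break
        # A real agent would call the backing API here; this sketch only records the step.
        outcome.completed.append(step.action)
    return outcome
```

Checking each step before it runs, rather than after, means an oversized refund is never issued without a human, while the lookup that precedes it is still automated.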

11. AI can automate up to 43% of customer service tasks

Note this is tasks, not tickets. A single ticket usually contains several tasks: identity verification, history lookup, root-cause analysis, action, follow-up. AI rarely automates the entire ticket but reliably automates most of the steps inside it. That is why agent-assist adoption keeps climbing alongside full-deflection bots - they are complements, not substitutes.
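The tasks-versus-tickets distinction is easy to see with a little arithmetic. The data below is a hypothetical sample, not measured results: three tickets built from the five steps named above, where most steps are automated but only one ticket is automated end to end.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Task:
    name: str
    automated: bool


# The five steps the paragraph lists for a typical ticket.
STEPS = ["verify_identity", "lookup_history", "root_cause", "take_action", "follow_up"]


def make_ticket(flags: List[bool]) -> List[Task]:
    """Build a ticket by pairing each step with whether AI handled it."""
    return [Task(name, auto) for name, auto in zip(STEPS, flags)]


def task_automation_rate(tickets: List[List[Task]]) -> float:
    """Share of individual tasks handled by AI across all tickets."""
    tasks = [t for ticket in tickets for t in ticket]
    return sum(t.automated for t in tasks) / len(tasks)


def full_ticket_automation_rate(tickets: List[List[Task]]) -> float:
    """Share of tickets where every task was handled by AI."""
    return sum(all(t.automated for t in ticket) for ticket in tickets) / len(tickets)


# Hypothetical sample: two tickets each need a human for one step, one needs none.
SAMPLE = [
    make_ticket([True, True, False, True, True]),
    make_ticket([True, True, True, False, True]),
    make_ticket([True, True, True, True, True]),
]
```

On this sample, `task_automation_rate(SAMPLE)` is 13/15 (about 87%) while `full_ticket_automation_rate(SAMPLE)` is 1/3, which is exactly the gap between task automation and ticket automation.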

12. AI improves query resolution speed by about 40%

This number lines up with what Berrydesk customers report once they move from a single-model setup to a routed setup: route easy, high-volume queries to DeepSeek V4 Flash or MiniMax M2 (about 8% of the price of Claude Sonnet at roughly twice the speed), and reserve Claude Opus 4.7 or Gemini 3.1 Ultra for the genuinely hard ones. The aggregate latency drop comes from spending time only where it matters.
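A routed setup can start very simply. The sketch below uses keyword lists as a stand-in for a real intent classifier and placeholder model identifiers (real provider model IDs will differ); in practice the classifier could itself be a small, cheap model tuned on your own ticket history.

```python
# Placeholder identifiers for the two tiers named in the article; not real API model IDs.
CHEAP_MODEL = "deepseek-v4-flash"
FRONTIER_MODEL = "claude-opus-4.7"

# Hypothetical keyword lists standing in for a real intent classifier.
HARD_SIGNALS = ("chargeback", "legal", "data loss", "outage", "cancel my contract")
EASY_INTENTS = ("order status", "password reset", "shipping time", "opening hours")


def classify(query: str) -> str:
    """Label a query as 'hard', 'easy', or 'unknown' using simple keyword matching."""
    q = query.lower()
    if any(signal in q for signal in HARD_SIGNALS):
        return "hard"
    if any(intent in q for intent in EASY_INTENTS):
        return "easy"
    return "unknown"


def route(query: str) -> str:
    """Send hard queries to the frontier tier and everything else to the cheap tier."""
    # Unknown queries default to the cheap tier on the assumption that most
    # unclassified traffic is routine; a failed answer can be retried upstream.
    return FRONTIER_MODEL if classify(query) == "hard" else CHEAP_MODEL
```

Defaulting unknown traffic to the cheap tier is the cost-first choice; a team worried about quality could flip that default and send unknowns to the frontier model instead.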

13. AI-powered sentiment analysis hits ~90% accuracy

Sentiment scoring has been a workhorse AI capability since long before frontier LLMs, but accuracy has crept up because models now understand sarcasm, hedging, and cultural register. The practical use is routing - escalating angry messages to senior agents, flagging churn-risk language to the retention team, and tagging high-NPS conversations for marketing.
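Routing on sentiment usually comes down to a few thresholds. This sketch assumes a sentiment model that returns a score between -1 and 1, a boolean flag for churn-risk language, and an optional NPS answer; the `-0.6` cut-off and the queue names are hypothetical, and NPS scores of 9 or 10 are treated as high (the standard promoter band).

```python
from typing import List, Optional

ANGRY_THRESHOLD = -0.6  # hypothetical cut-off on a -1 (angry) to 1 (happy) scale


def sentiment_routes(score: float, churn_language: bool, nps: Optional[int] = None) -> List[str]:
    """Return the queues or tags a conversation should be routed to."""
    if not -1.0 <= score <= 1.0:
        raise ValueError("sentiment score must be between -1 and 1")
    routes: List[str] = []
    if score <= ANGRY_THRESHOLD:
        routes.append("senior_agents")  # angry: escalate to experienced staff
    if churn_language:
        routes.append("retention_team")  # e.g. "thinking of cancelling"
    if nps is not None and nps >= 9:
        routes.append("marketing_highlights")  # NPS promoters (9-10)
    return routes or ["standard_queue"]
```

A single conversation can land in more than one queue; an angry customer who mentions cancelling goes to both senior agents and the retention team.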

Customer perception: warmer than the discourse suggests

14. 80%+ of customers report a positive experience with AI support

The narrative that customers hate bots is mostly residual from a 2018-era generation of decision-tree chatbots. Customers who get correct, fast, branded answers with no hold music report high satisfaction - often higher than for human-handled tickets. The variable is quality, not channel. A bad bot frustrates; a good one earns a thank-you.

15. 73% of customers believe AI will improve service quality

Customer sentiment toward AI support has flipped from skeptical to optimistic over two years. Part of that is exposure - most consumers now talk to ChatGPT or Claude weekly and bring those expectations to support interactions. They expect the brand's bot to know its own products at least as well as a generalist assistant.

16. 72% of business leaders say AI outperforms humans in some support interactions

The phrase "outperforms humans" has to be read carefully - it usually means specific dimensions: 24/7 availability, response speed, knowledge consistency, and tone calibration. Humans still win on judgment, novelty, and emotional escalations. The mature read of this stat is "AI is better at the parts of support that benefit from machine traits, and humans are better at the parts that benefit from human ones." That is also how you should staff.

17. 95% of customer interactions will be AI-assisted

The "AI-assisted" framing matters. This does not mean 95% of conversations replace humans - it means 95% touch AI somewhere in the loop, whether through bot triage, real-time agent suggestions, post-call summaries, sentiment tagging, or automated follow-ups. By that definition, the 95% figure already underestimates the present.

Outcomes: the bottom-line numbers

18. AI-driven escalation prevention cuts complaint volume by 65%

Escalations are expensive in both time and brand damage. AI prevents them in two ways: catching frustration in real time and adjusting tone or routing, and getting the answer right the first time so there is no second touch. The compounding effect on the operations side is significant - fewer escalations means fewer angry calls, which means fewer tier-2 hours, which means more capacity for proactive work.

19. AI-driven analytics reduce churn by up to 15%

The mechanism is proactive outreach. Models read signals - declining usage, support-ticket sentiment, billing friction - and surface accounts at risk before they cancel. The 15% churn reduction is conservative; some Berrydesk customers running tight integrations between the support agent and CRM data see retention lifts well above that on the cohorts they target.
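A churn-risk score can be as simple as a weighted blend of the three signals named above. The weights, normalizations, and 0.5 threshold in this sketch are hypothetical; a real deployment would fit them on historical churn data rather than pick them by hand.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class AccountSignals:
    usage_change_pct: float  # month-over-month usage change, e.g. -40.0 for a 40% drop
    avg_ticket_sentiment: float  # -1 (negative) to 1 (positive)
    failed_payments_90d: int  # billing-friction proxy


# Hypothetical weights; they sum to 1 so the score stays in [0, 1].
WEIGHTS = {"usage": 0.5, "sentiment": 0.3, "billing": 0.2}


def churn_risk(s: AccountSignals) -> float:
    """Blend the three signals into a 0..1 risk score (higher means more likely to churn)."""
    usage = min(max(-s.usage_change_pct / 100.0, 0.0), 1.0)  # only declines add risk
    sentiment = (1.0 - s.avg_ticket_sentiment) / 2.0  # maps +1 -> 0 and -1 -> 1
    billing = min(s.failed_payments_90d / 3.0, 1.0)  # saturates at 3 failures
    return WEIGHTS["usage"] * usage + WEIGHTS["sentiment"] * sentiment + WEIGHTS["billing"] * billing


def at_risk(accounts: Dict[str, AccountSignals], threshold: float = 0.5) -> List[str]:
    """Account IDs above the threshold, highest risk first, for proactive outreach."""
    scores = {aid: churn_risk(sig) for aid, sig in accounts.items()}
    return sorted((aid for aid, r in scores.items() if r > threshold), key=lambda aid: -scores[aid])
```

The ordered list is what a retention team or an outbound agent would work through, starting with the accounts most likely to cancel.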

20. 84% of organizations say AI improves issue-resolution speed; 55% report up to 25% faster resolution

The split between "improves" and "up to 25% faster" matters. Not every AI support deployment hits big numbers. The organizations reporting the larger speed gains are typically the ones that did three things: connected the agent to real data sources (not just docs), enabled AI Actions (not just answers), and routed by query type (not one model for everything). The remaining 29 percentage points, organizations that see some improvement but not the bigger gains, are often still running v1 deployments and leaving most of the available improvement on the table.

What the 2026 model landscape changes

The statistics above were collected across a span where the underlying models kept getting better. In 2026, three specific shifts move the numbers further:

Long context windows make RAG optional. With Claude Sonnet 4.6 and DeepSeek V4 offering 1M-token context at no surcharge, and Gemini 3.1 Ultra at 2M tokens, you can fit an entire mid-sized knowledge base, the full conversation history, and policy documents in-context. RAG becomes a tuning lever for cost, not a hard architectural requirement. That removes a class of "the bot couldn't find the answer" failures that dragged on first-generation deflection rates.
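At its simplest, the choice between putting everything in context and retrieving is a token-budget check. This sketch assumes a rough four-characters-per-token estimate (use the model's own tokenizer in practice) and a fixed reserve for the reply; the default window matches the 1M-token figure quoted above, and the function names are hypothetical.

```python
from typing import List


def estimate_tokens(text: str) -> int:
    """Rough estimate at ~4 characters per token; swap in the model's tokenizer for real budgets."""
    return -(-len(text) // 4)  # ceiling division


def plan_context(kb_docs: List[str], history: str, policies: List[str],
                 context_window: int = 1_000_000, output_reserve: int = 8_000) -> str:
    """Return 'full_context' if everything fits alongside the reply budget, else 'retrieve'."""
    total = estimate_tokens(history) + sum(estimate_tokens(d) for d in kb_docs + policies)
    return "full_context" if total + output_reserve <= context_window else "retrieve"
```

Because input tokens are still billed, a team might choose retrieval even when `plan_context` returns `"full_context"`; that is the cost lever the paragraph describes.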

Open-weight frontier collapses cost. DeepSeek V4 Flash, MiniMax M2, GLM-5.1, and Qwen3.6 are at or near closed-frontier quality on agentic coding and tool use, at fractions of the price. For a Berrydesk customer doing 50,000 monthly resolutions, the per-resolution cost on a routed setup is now well under a cent for the easy 80% of traffic. That changes which support workflows are worth automating - formerly marginal use cases now have clear ROI.
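The "well under a cent" claim can be checked with back-of-envelope arithmetic. Prices are the DeepSeek V4 Flash rates quoted earlier ($0.14 input / $0.28 output per million tokens); the 6,000 input and 800 output tokens per resolution are assumptions, summed across every turn of a conversation, so substitute your own averages.

```python
def resolution_cost_usd(input_tokens: int, output_tokens: int,
                        input_price_per_m: float, output_price_per_m: float) -> float:
    """Model cost for one resolution, with prices quoted in USD per million tokens."""
    return (input_tokens * input_price_per_m + output_tokens * output_price_per_m) / 1_000_000


# Prices from the article; token counts per resolution are hypothetical averages.
FLASH_IN, FLASH_OUT = 0.14, 0.28
per_resolution = resolution_cost_usd(6_000, 800, FLASH_IN, FLASH_OUT)

# 50,000 monthly resolutions, with the easy 80% handled by the cheap tier.
monthly_easy = 50_000 * 0.80 * per_resolution
```

Under those assumptions each easy resolution costs about $0.0011 in model spend, and the 40,000 easy resolutions come to roughly $42.56 a month.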

Agentic tool use makes AI Actions production-grade. The benchmark scores tell the story: Kimi K2.6 hits 58.6 on SWE-Bench Pro with 12-hour autonomous sessions, GLM-5.1 hits 58.4 and runs 8-hour plan-execute-test-fix loops, Claude Opus 4.7 leads at 64.3. These models reliably use tools - read an order, call a refund API, look up a tracking number, write back to the CRM - without the brittle prompt engineering that defined the 2024 generation. AI Actions in Berrydesk for booking, payments, and account changes are no longer a demo; they are the default deployment shape.

Pitfalls to watch on the way in

A few traps come up repeatedly in deployments that miss their targets:

- Single-model deployments. Running everything on the most expensive model wastes spend; running everything on the cheapest one tanks quality on the hard 20%. Route by query type.

- Knowledge bases that drift. A 2024 doc set will give 2024 answers. Wire your knowledge sources to refresh on a schedule, and let the agent flag confident-but-wrong answers back to you.

- No human handoff path. The fastest way to torch CSAT is to trap an angry customer in a bot loop. Always provide a clean escalation route, with full context handed to the human.

- Treating AI Actions as cosmetic. A bot that answers but cannot act ends every conversation with "please contact our team." That is half the value left on the floor.
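A clean escalation route mostly means two things: deciding when to hand off, and passing the human everything the bot already knows. The trigger phrases, the two-failed-turns rule, and the payload fields in this sketch are hypothetical choices, not a prescribed schema.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

# Hypothetical trigger phrases and failure budget.
HUMAN_REQUEST_PHRASES = ("human", "real person", "representative")
MAX_FAILED_TURNS = 2


@dataclass
class Conversation:
    customer_id: str
    messages: List[Tuple[str, str]] = field(default_factory=list)  # (role, text) pairs
    failed_turns: int = 0  # bot replies the customer said did not help
    actions_taken: List[str] = field(default_factory=list)


def should_hand_off(conv: Conversation) -> bool:
    """Hand off if the latest customer message asks for a person or the bot keeps failing."""
    last_customer = next((text for role, text in reversed(conv.messages) if role == "customer"), "")
    asked_for_human = any(p in last_customer.lower() for p in HUMAN_REQUEST_PHRASES)
    return asked_for_human or conv.failed_turns >= MAX_FAILED_TURNS


def handoff_payload(conv: Conversation, reason: str) -> dict:
    """Bundle the context a human needs so the customer does not have to repeat themselves."""
    return {
        "customer_id": conv.customer_id,
        "reason": reason,
        "transcript": list(conv.messages),
        "actions_taken": list(conv.actions_taken),
    }
```

Capping failed turns is what breaks the bot loop: even a customer who never types "human" reaches a person after two replies that did not help.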

Where to go from here

The data is consistent across sources, segments, and company sizes: AI in customer support is delivering measurable wins on speed, cost, deflection, and satisfaction, and the floor under those wins is rising every quarter as models improve. The teams getting the largest gains are the ones treating support AI as a routed, action-capable system - not a single chatbot bolted onto a help center.

If you want to see what a routed, action-capable agent looks like for your support stack, you can build one on Berrydesk in a few minutes - pick a model (Claude, GPT, Gemini, DeepSeek, Kimi, GLM, Qwen, MiniMax, and more), train it on your docs, websites, Notion, Drive, and YouTube, brand the widget, wire up AI Actions, and ship it to your site, Slack, Discord, or WhatsApp. Start at berrydesk.com.


Chirag Asarpota is the founder of Strawberry Labs, the team behind Berrydesk - the AI agent platform that helps businesses deploy intelligent customer support, sales and operations agents across web, WhatsApp, Slack, Instagram, Discord and more. Chirag writes about agentic AI, frontier model selection, retrieval and 1M-token context strategy, AI Actions, and the engineering it takes to ship production-grade conversational AI that customers actually trust.